Beyond CPRI: planning for 5G fronthaul

Previously published on Light Reading

My last blog focused on physical layer network planning in preparation for 5G (see Preparing the Transport Network for 5G: The Future Is Fiber). This blog looks above the physical layer at mobile fronthaul protocols. Here the situation is far more complex, with many moving parts that must still come together.

The heart of the issue lies with the common public radio interface (CPRI) protocol, which transmits digitized RF data over optical fiber between the remote radio head (RRH) at the top of the cell tower and the base station below. First defined in 2003 and intended as a replacement for copper and coax cabling on cell towers, CPRI runs at data rates from 614 Mbit/s at the low end to more than 10 Gbit/s at the high end.
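As a rough illustration of where those data rates come from, the sketch below rebuilds a CPRI line rate from its frame structure. It assumes the commonly cited CPRI framing parameters (a 3.84 MHz basic frame rate, 16 words per basic frame, and 8b/10b or 64b/66b line coding). None of these values come from this blog, so check them against the CPRI specification before relying on them. The function and constant names are hypothetical.

```python
# Assumed CPRI framing parameters (not stated in this blog).
BASIC_FRAME_RATE_MHZ = 3.84   # one basic frame per 3.84 MHz chip period
WORDS_PER_BASIC_FRAME = 16    # 1 control word plus 15 payload words


def cpri_line_rate_mbps(word_bits, coded_bits=10, data_bits=8):
    """Return the line rate in Mbit/s for a given word length.

    coded_bits/data_bits models the line-coding overhead:
    10/8 for 8b/10b (the default) or 66/64 for 64b/66b.
    """
    uncoded_rate = BASIC_FRAME_RATE_MHZ * WORDS_PER_BASIC_FRAME * word_bits
    return uncoded_rate * coded_bits / data_bits


# Lowest rate option: 8-bit words with 8b/10b coding.
low_end = cpri_line_rate_mbps(word_bits=8)

# A higher rate option: 160-bit words with 64b/66b coding.
high_end = cpri_line_rate_mbps(word_bits=160, coded_bits=66, data_bits=64)

print(f"low end:  {low_end:.1f} Mbit/s")
print(f"high end: {high_end:.1f} Mbit/s")
```

Under these assumptions the two results land at about 614.4 Mbit/s and 10,137.6 Mbit/s, which matches the range quoted above.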

This CPRI/fiber connection between the RRH and baseband unit (BBU) has also been positioned as key to the centralized RAN architectures of the future. However, while there is little debate that fiber-based fronthaul is the future, there is concern that CPRI, as it stands, does not scale to the demands of 5G. Below we discuss three of the challenges that have come to light.

MIMO scaling

CPRI requires a dedicated link for every antenna -- whether it's a dedicated fiber or, more likely, a dedicated wavelength on a fiber. This requirement becomes problematic as radio vendors invest in multiple-input multiple-output (MIMO) technology, which uses multiple transmitters and receivers to transfer data simultaneously and so increases data rates for 4G and 5G radio. To illustrate, a 2x2 MIMO transmission with three sectors requires 12 CPRI streams, as each input and output in each sector requires its own CPRI link (i.e., 12 wavelengths). A 4x4 MIMO transmission doubles the count to 24 CPRI links/wavelengths, and the MIMO counts proposed for 5G are significantly higher still. Initially, DWDM vendors loved the idea of CPRI links and WDM wavelengths everywhere, but it has become clear to the industry that CPRI simply won't scale economically to the high antenna counts expected with MIMO in 5G.
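The arithmetic behind those counts can be sketched in a few lines. This is a simplified model that follows the example above: one CPRI link per transmit path and per receive path, multiplied by three at each site. The function name and the 64x64 configuration are hypothetical illustrations, not figures from any vendor or standard.

```python
def cpri_links(tx_paths, rx_paths, sectors=3):
    """Count dedicated CPRI links (or wavelengths) for one cell site.

    Assumes one link per transmit path and one per receive path,
    repeated for each sector, as in the example above.
    """
    return (tx_paths + rx_paths) * sectors


links_2x2 = cpri_links(2, 2)      # 2x2 MIMO
links_4x4 = cpri_links(4, 4)      # 4x4 MIMO
links_64x64 = cpri_links(64, 64)  # hypothetical massive-MIMO array

print(links_2x2, links_4x4, links_64x64)
```

The count grows linearly with antenna paths, so a hypothetical 64x64 array would need 384 dedicated links at a single site under this model, against 12 for 2x2.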

Functional split

Given the scalability challenge described above, the industry understands that a more efficient fronthaul technology is required for 5G. What that technology will ultimately be, however, remains a matter of intense debate. A critical point that must be resolved before the technology moves forward is the "functional split" that determines which Layer 1, 2 and 3 processing functions reside in the RRH and which reside at the BBU. With at least some Layer 2 functions (Ethernet) placed in the RRH, aggregation and statistical multiplexing can take place before data hits the fronthaul network -- thus reducing (potentially greatly) the number of wavelengths required for fronthaul transmission.

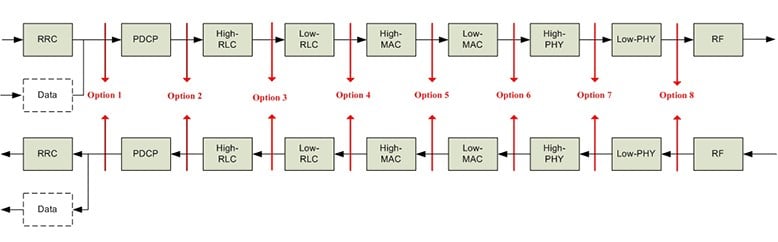

The Institute of Electrical and Electronics Engineers Inc. (IEEE) is working on standardizing Ethernet fronthaul (IEEE P.1914.1), and the 5G Infrastructure Public-Private Partnership (5GPPP) is also working on projects in this area. One challenge, however, is that there is no consensus on where the RRH vs. BBU functional split should take place, and there are currently eight split options under consideration (see below). It is most likely that several (though not all eight) split options will be defined in standards.

Figure 1: Functional Split Options for 5G

(Source: 3GPP)

The second challenge with functional splits is that there are tradeoffs relating to processing and latency. The more processing performed at the cell tower, the greater the latency introduced before transmission hits the BBU. We discuss latency in more detail below.

Latency

The C-RAN architecture itself contributes latency between the RRH and the BBU due to fiber distances. With short fiber links up a cell tower, latency is not a consideration, but C-RAN architectures can place the BBUs up to 20 km from the cell tower.
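To put a number on the distance effect, the sketch below estimates one-way propagation delay over fiber. It assumes a group index of about 1.468, a typical figure for standard single-mode fiber that is not taken from this blog, and it ignores equipment and processing delay. The names are hypothetical.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
GROUP_INDEX = 1.468  # assumed typical value for standard single-mode fiber


def one_way_delay_us(distance_km, group_index=GROUP_INDEX):
    """Return one-way fiber propagation delay in microseconds."""
    seconds = distance_km * group_index / SPEED_OF_LIGHT_KM_PER_S
    return seconds * 1e6


tower_delay = one_way_delay_us(0.05)  # roughly 50 m up a cell tower
cran_delay = one_way_delay_us(20)     # BBU located 20 km away

print(f"tower: {tower_delay:.2f} us, C-RAN: {cran_delay:.1f} us")
```

Under these assumptions a 20 km span adds close to 100 microseconds each way (about 5 microseconds per kilometer), compared with a fraction of a microsecond for a run up the tower.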

Coupled with distance, higher-layer processing done at the RRH (as described under functional split) introduces additional latency that must be carefully planned for and understood. A lower-layer split over CPRI is extremely low in latency, but costly and inefficient in its use of bandwidth. A higher-layer split makes it possible to use lower-cost packet transport for fronthaul, but also introduces greater latency. Certain 5G applications -- such as tactile Internet, augmented/virtual reality and real-time gaming -- will be sensitive to latency between the RRH and the BBU.

Future considerations

Requirements including latency, power loss and CPRI bit error rate, which may not be critical for 3G and LTE, will become major concerns as the industry migrates to LTE-Advanced Pro and 5G. Here, we have discussed the protocol options and functional splits that are in play as the industry seeks the best way to meet tomorrow's 5G application requirements. Regardless of how the protocol scenarios play out, the physical fiber infrastructure being put in place will remain the same. In Part 1 of this blog, we covered the risks of chromatic dispersion (CD) and polarization mode dispersion (PMD) that are introduced as operators move to centralized RAN architectures and as fiber spans between the RRH and BBU increase. All of these fiber characterization testing considerations still apply.

The key at the protocol layer is that operators must understand that fronthaul will no longer be dominated by CPRI alone, and that Ethernet transport will have a role to play. Other protocols, such as OTN-based fronthaul, may also emerge. Planning teams need to be flexible enough to adapt to and accommodate whichever scenarios ultimately play out.