Reveal the invisible with the EXFO service assurance platform

The need for a new approach to service assurance

Whether it’s the advent of 5G networks and the complexity that they introduce, or the explosion in fiber to the premises (FTTP) brought on by remote work, video streaming and online gaming, the status quo approach to service assurance is no longer viable.

With so much of future 5G revenues dependent on service level agreements, and service providers largely blind to 98% of customer-impacting events, the need for effective service assurance to cut through the noise and deliver superior network visibility is greater than ever. In fact, 65% of MNOs say that a lack of actionable insight is preventing them from automating networks and fault resolution, essential to meeting demanding performance expectations in enterprise applications.1

Service providers currently struggle to make sense of and act on network performance data in a timely manner. Consequently, network operations personnel are spending more time troubleshooting and isolating the fault domain than improving the customer experience. In fact, it now takes an average of 12 people working together for over 3 hours to resolve complex performance impairments.

Root cause analysis in the context of diverse tech stacks and imperfect observability of telecom infrastructure is challenging enough. Doing it at the speed customers expect, when problems appear and disappear in the blink of an eye, is truly a feat.

As service providers work to deliver superior network performance and differentiated subscriber quality of experience (QoE) in the context of new technical architectures and new point technologies—while maintaining existing generations of radio networks and value-added services—they are struggling with their existing service assurance toolsets, which weren’t designed with such complexity in mind. These drivers of complexity include:

- cloudification of network functions with dynamic orchestration

- disaggregation of the RAN with logical and physical separation of the distributed unit (DU), the radio unit (RU) and the central unit (CU)

- control and user plane separation (CUPS)

- dynamic service-based architecture (SBA) software deployments in place of dedicated network equipment and monolithic applications

- network densification with the deployment of small cells in dense urban environments to deliver performant service at higher radio frequencies

- more fiber deployments across front-haul, mid-haul and back-haul to meet the densification needs of wireless networks and the need for more fiber to the premises (FTTP) to address work-from-home and remote learning scenarios

- mobile edge computing (MEC), which takes computing resources out of the data center and pushes them out to the edge of the network; service providers are counting on MEC as a major revenue generator, so it can be expected that absolute volumes will grow significantly

- 5G network slicing backed by stringent service level agreements (SLAs); some of these will form the basis for private network initiatives

- the need for precision timing synchronization along the service path between the core and the RAN as the absolute number of network links and deployed systems grows exponentially

- use cases and services that rely upon 5G capabilities such as ultra-reliable low latency communications (URLLC), enhanced mobile broadband (eMBB) or massive machine type communications (mMTC); these are typically backed by SLAs and are seen as important revenue generators for 5G networks

The status quo approach to service assurance is no longer feasible due to the overwhelming amounts of performance and telemetry data being generated by the different network domains, layers and services. As a result, there is no single source of truth regarding network performance or the customer experience. The status quo is also challenged by increased expectations about network performance, specifically requirements for low latency and high throughput governed by enterprise-grade SLAs, which mean that historic MTTR intervals are no longer tenable.

Imagining a new approach to service assurance

For all the reasons discussed above, a new approach to service assurance is required. However, what exactly would that look like? How would it differ from today’s service assurance solutions? How would it deliver improved network visibility?

First off, it would abstract the complexity of the network while offering 100% visibility from core to RAN to subscriber, as well as the services and applications being used. It could be deployed in physical, virtual and cloud-native contexts all along the service path, all reporting to a centralized cloud-native solution displaying key network performance and quality of service (QoS) indicators and alerts from a single pane of glass.

It would provide support for multiple generations of radio networks (2G/3G/4G/5G) across a broad variety of vendors. It would also support different fiber network topologies.

Faced with a deluge of performance and telemetry data and triggered alarms, it would enable service providers to cut through the clutter, made possible by the application of artificial intelligence and machine learning. It would detect anomalies in near real-time and it would correlate inputs from across the network to aid in root cause analysis. It would help to prioritize responses by considering the volume of affected customers and their relative importance.
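To make the ideas of anomaly detection and impact-based prioritization concrete, here is a minimal sketch in Python. It is an illustration only, not a description of how any particular product works: the trailing-window z-score test, the `Incident` fields, the `importance_weight` values and the sample latency figures are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean, stdev


def find_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that deviate from the mean of the
    preceding `window` samples by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip flat histories (sigma == 0) to avoid flagging on zero variance.
        if sigma > 0 and abs(samples[i] - mu) > threshold * sigma:
            anomalies.append(i)
    return anomalies


@dataclass
class Incident:
    name: str
    affected_customers: int
    importance_weight: float  # hypothetical: higher for SLA-backed customers


def prioritize(incidents):
    """Order incidents by affected customers scaled by importance, highest first."""
    return sorted(
        incidents,
        key=lambda inc: inc.affected_customers * inc.importance_weight,
        reverse=True,
    )


# Hypothetical latency samples (ms) with one obvious spike at index 6.
latency_ms = [20, 21, 19, 20, 22, 21, 95, 20, 21]
anomalous_indices = find_anomalies(latency_ms)

# Hypothetical incidents: a small enterprise slice can outrank a larger
# consumer outage once customer importance is taken into account.
incidents = [
    Incident("cell-site outage", 1200, 1.0),
    Incident("enterprise slice latency", 40, 50.0),
    Incident("single-home fiber fault", 1, 1.0),
]
ranked = [inc.name for inc in prioritize(incidents)]
```

In this sketch the weighting is a simple product; a real deployment would likely draw importance from SLA terms and customer records rather than a hand-set number.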

It would provide context (such as dynamically generated network topology) alongside actionable insight, enabling personnel to quickly locate and diagnose the source of an impairment and take action, cutting down on mean time to repair (MTTR). It would provide clear and rapid responses regarding the fault domain, reducing finger-pointing and the unnecessary dispatching of personnel to the field. Where a truck roll was necessary, personnel would benefit from geo-located identification of the impairment.

Building on the ability to make sense of the data, this new generation of service assurance solution would also be able to predict outages, enabling proactive efforts to ensure service availability and performance.

It would also be fully orchestratable with the ability to spin up and tear down probes in seconds. Finally, it would be modular, with the ability to interact with third-party service assurance solutions via application programming interfaces (APIs) and northbound interfaces.

There are several benefits to such an approach:

- improved network performance

- shorter mean time to repair/resolve

- better customer quality of experience

- lower customer churn (and higher Net Promoter Score results)

- reduced OPEX, as well as a reduced need for promotions to counter customer churn

- confidence when launching new services

The EXFO adaptive service assurance platform: reveal the invisible

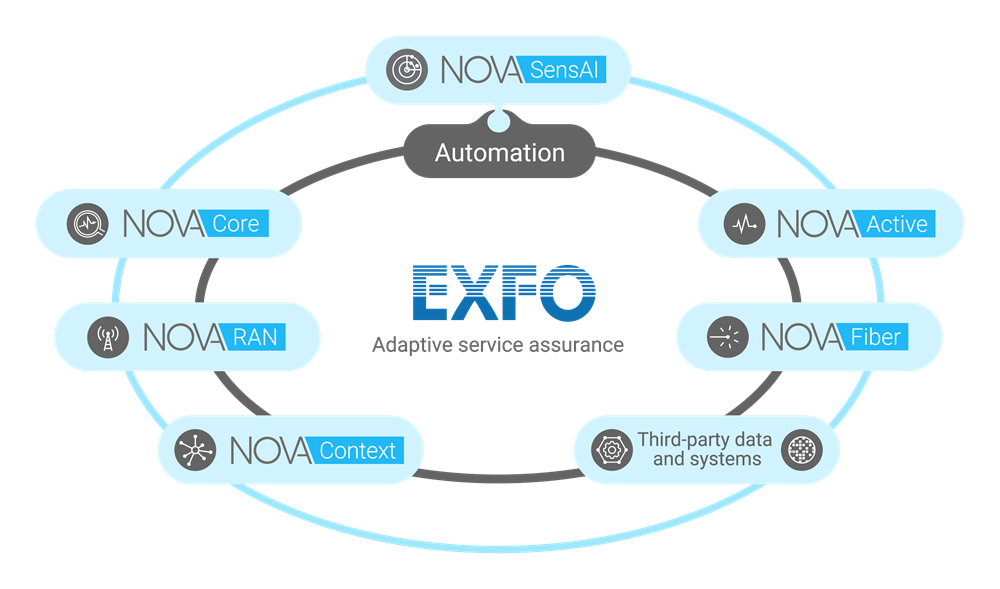

The EXFO adaptive service assurance platform enables service providers to achieve—and act upon—end-to-end visibility across multi-generation, multi-vendor technology stacks. It supports physical, virtualized and cloud-native network functions with a range of deployment and automation scenarios.

EXFO adaptive service assurance platform components include:

- Nova SensAI — AI and ML-powered anomaly detection and case correlation, operational analytics, service analytics and customer analytics

- Nova Core — multi-generation passive monitoring solution with comprehensive metrics (KQIs) for apps, voice and video

- Nova RAN — support for multi-vendor, multi-generation RAN with tools for troubleshooting, optimization and virtual drive testing

- Nova Context — generation of dynamic and static network topologies across physical and virtual infrastructure with optional geo-location; provision services based on optimal pathways

- Nova Active — synthetic testing available in physical, virtual and cloud-native form factors; support for micro-services

- Nova Fiber — testing of fiber networks during build out and creation of birth certificates capturing initial performance levels; ongoing monitoring of fiber networks with geo-location providing fault identification within as little as a meter

The EXFO adaptive service assurance platform features adaptive data sampling to cut through the noise, drastically reducing the volume of data that needs to be captured, transmitted and stored for analysis. It measures only what’s needed, where it’s needed, when it’s needed, delivering the small data that enables actionable insight.
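The general idea behind adaptive sampling can be sketched in a few lines of Python. This is a hedged illustration of the concept, not EXFO's algorithm: the function name `next_interval`, the halve-or-double rule, the 10% tolerance and the 1 to 60 second bounds are all assumptions made for the example.

```python
def next_interval(current_s, value, baseline, tolerance=0.1, min_s=1.0, max_s=60.0):
    """Pick the next sampling interval in seconds.

    Sample more often (halve the interval) when the metric drifts more than
    `tolerance` from its baseline; back off (double the interval) when it is
    stable. The result is always kept within [min_s, max_s].
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    deviation = abs(value - baseline) / abs(baseline)
    if deviation > tolerance:
        return max(min_s, current_s / 2)
    return min(max_s, current_s * 2)


# Hypothetical latency readings (ms) against a 20 ms baseline.
readings = [20, 20, 35, 36, 34, 20, 20]
interval = 60.0
intervals = []
for reading in readings:
    interval = next_interval(interval, reading, baseline=20)
    intervals.append(interval)
# intervals == [60.0, 60.0, 30.0, 15.0, 7.5, 15.0, 30.0]
```

The effect is that a healthy metric is sampled once a minute, while a degraded one is sampled progressively more often, so most of the captured data concerns the periods that matter.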

In addition, the EXFO platform correlates events across domains to identify fault origination and speed resolution, making relevant data available at the click of a mouse. It provides deep context for decision-making, tying network performance to QoE and other KQIs. The EXFO service assurance platform also features support for third-party data and systems. EXFO solutions feature APIs and northbound interfaces for integration with BSS and OSS systems.
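As a rough illustration of cross-domain correlation, the Python sketch below groups events from different domains that occur close together in time and treats the earliest event in each group as a candidate origin. It is a simplification under stated assumptions: the `Event` fields, the 5-second gap and the sample events are hypothetical, and temporal proximity alone is only a hint, not proof of causation.

```python
from dataclasses import dataclass


@dataclass
class Event:
    timestamp: float  # seconds since an arbitrary reference point
    domain: str       # e.g. "fiber", "RAN", "core"
    description: str


def correlate(events, max_gap_s=5.0):
    """Group events whose timestamps fall within `max_gap_s` of the
    previous event in time order. Returns a list of groups (lists)."""
    groups = []
    for event in sorted(events, key=lambda e: e.timestamp):
        if groups and event.timestamp - groups[-1][-1].timestamp <= max_gap_s:
            groups[-1].append(event)
        else:
            groups.append([event])
    return groups


# Hypothetical events arriving out of order from three domains.
events = [
    Event(103.0, "core", "session drops"),
    Event(100.0, "fiber", "loss of signal"),
    Event(300.0, "RAN", "handover failure"),
    Event(101.5, "RAN", "cell degraded"),
]
groups = correlate(events)

# The earliest event in each group is the candidate fault origin.
candidate_origins = [group[0].domain for group in groups]
```

A production system would also use topology, such as which cells are served by which fiber link, to confirm that the grouped events are actually related.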

Consequently, the EXFO adaptive service assurance platform enables service providers to reveal the invisible aspects of their network performance and to take action on them sooner, while providing the unique levels of insight that come from an end-to-end view of the network.

For over two decades, leading service providers around the world have trusted EXFO service assurance technology to turn up, test, troubleshoot, optimize and automate their networks.

Learn more about how EXFO adaptive service assurance sheds light on the invisible factors driving network performance while delivering exceptional customer experience.